Is AI Taking Over? A Catholic Educator Breaks Down Facts

Today I am interviewing Daniel Goddu, a software engineer and Homeschool Connections instructor, who is putting together a high school course on Artificial Intelligence. I talked with him about his own interest in AI, where he thinks this tech is taking us, and what students can learn about this important new technology…

Tell us a little bit about your background. How did you get interested in AI?

As a career computer scientist, I am intrigued by many “technical” matters. I can find technical matters in almost anything: movies, restaurants, and construction, to name a few. I remember a commercial for a new Google Pixel phone. It showed features of its camera that let you edit out certain objects from a picture. They were calling it AI. Except it wasn’t AI, it was simply Photoshop on a phone.

Later, I began reading fanciful, speculative, and alarmist quotes about AI from popular scientists and technologists. Stephen Hawking, for example, said, “The development of full artificial intelligence could spell the end of the human race.” Elon Musk said, “AI is a fundamental existential risk for human civilization.”

Excuse me, aren’t we talking about software? That we control? Even if we design a system to write software, we ultimately control the kind of software it writes.

So, I dug in, watching a ton of YouTube videos, taking a 16-hour AI course over a weekend, and eventually enrolling in a Certificate in Applied Artificial Intelligence program from Johns Hopkins University/Great Learning. What I concluded was that, while AI is really smart, it’s ultimately still software. In fact, it’s repurposed software (mostly math, such as statistics, linear algebra, and linear regression) running on repurposed graphics processing units (GPUs) originally used for gaming. Very clever stuff.

Many people think of AI as a glorified Google search engine, but there’s so much more to it. What are some of the nifty things people are doing with AI in 2026?

AI has brought significant efficiency gains to internet search engines. No longer do you need to hunt and peck through numerous links to figure out whether one of them has the answer you are looking for. Most search engines can now produce a conversational summary with references and links, saving you a whole lot of time finding an answer to your query.

ChatGPT and its competitors are generative AI models. Their roots lie in the customer service chatbots of old, Netflix recommendations, grammar checkers, and email spam filters. These legacy applications have been greatly improved by AI. They are no longer simple rule-based systems (if X then Y…) but rather large language models (LLMs), machine learning models, and deep learning models, which are adaptive pattern-recognition systems. LLMs understand context, can handle follow-up questions, and give novel responses in a natural conversation rather than canned replies. Machine learning models (say, for spam filtering) analyze sender reputation, email structure, language patterns, and user behavior. Deep learning (say, for Netflix) analyzes watch time, pause/rewind behavior, time of day, and thumbnail preferences. (I bet you didn’t know that about streaming services!) Generative AI (ChatGPT, Claude, Copilot) is the AI that gets the most buzz but accounts for just 11% of total AI development.

Banks use machine learning models to predict loan defaults and credit card fraud (at application time). Financial services companies use in-house ChatGPT-like applications to gather client data more efficiently and generate better-aligned, more sophisticated investment plans with a specified level of risk.

One of the largest advancements in AI is in image recognition. AI assists doctors not just in reading X-rays, but in predicting patient outcomes and suggesting personalized treatment plans.

Many Catholic parents are vehemently opposed to AI because it enables students to cheat or cut corners on their work. Is there any consensus on what constitutes an ethical versus unethical use of AI?

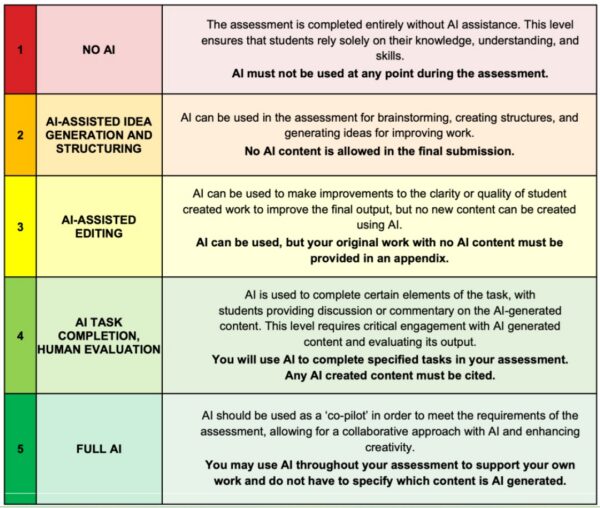

While there is an emerging consensus within academia, there is not yet general agreement. The current trend is to define the proper use of AI within an existing academic integrity policy. One example is the article “The Artificial Intelligence Assessment Scale (AIAS): A Framework for Ethical Integration of Generative AI in Educational Assessment” in the Journal of University Teaching and Learning Practice, whose authors developed the AI Assessment Scale (AIAS), a scale that sets out levels of AI use in assessments.

In general, plagiarism rules apply to the use of AI tools because the work they generate is not your own. Using AI without acknowledgment is no different from copying text from an article or encyclopedia and presenting it as if you wrote it. The only real distinction is that AI makes this kind of misuse much easier. The principle remains unchanged. Submitting anything, including AI‑generated material, as your own original work is misleading and constitutes plagiarism. The Aquinas Writing Advantage (AWA) program at Homeschool Connections summarizes it best:

“Using someone else’s words (whether a classmate’s, a website’s, or an AI tool’s words) is dishonest and a missed opportunity to develop the teen’s mind and heart. And learning to write with honesty will help your teen become a better communicator, thinker, and disciple of truth.” (https://awa.homeschoolconnections.com/ai/)

What would you say to parents who think, “Learning about AI is fine if you are going into tech, but the average kid doesn’t need to know this stuff”?

Ken Olsen, the founder of Digital Equipment Corporation, a maker of minicomputers for businesses and labs, is often quoted as saying, “There is no reason anyone would want a computer in their home.” While this quote was taken out of context, Mr. Olsen was skeptical about home computing. Digital missed the personal computer market and was sold in 1998 to Compaq, which was in turn acquired by Hewlett-Packard in 2002.

At one time, families thought they didn’t need a calculator, a computer, the internet, or a mobile phone. AI tools are on the same path. The question isn’t whether your child will use AI, but whether they’ll understand how it works, what its limits are, and how to use it responsibly. Any tool requires troubleshooting. AI tools will be no different.

There are many prognostications about AI revolutionizing white-collar employment in the near future. Do you think a lot of this is overblown? Or are we really on the cusp of a revolution in how intellectual labor is carried out?

AI is both overblown and revolutionary. It is overblown when public figures make sweeping, imaginative claims about AI. Mustafa Suleyman, the Chief Executive Officer of Microsoft AI, said very recently, “Most… white-collar work… will be fully automated…within the next 12 to 18 months.” Geoffrey Hinton, the so-called “Godfather of AI,” claimed last year that AI will create “massive unemployment.” Elon Musk said in 2024 that “AI will replace all human jobs, making work optional.”

These statements are not human-centered.

The reality is more nuanced. AI is automating routine paperwork, summarizing legal documents, managing calendars, and even drafting first versions of reports or presentations. But AI won’t ultimately replace people. It will augment them. Some company CEOs may try to replace workers with AI, but they will soon learn that the human factor can never be replaced.

The “average” worker now has access to tools that once belonged only to experts. That’s both empowering and unsettling. At the same time, there’s a danger in thinking AI can replace judgment, wisdom, or the ethical discernment that comes from human experience—especially in fields like law, healthcare, or ministry. AI can do a lot, but it can’t love, show mercy, or seek justice. So, the heart of intellectual labor—thinking, caring, deciding—remains and will always remain deeply human. The challenge is to keep those virtues at the center as we move forward.

Tell us about your course. What can students expect to learn?

I’m developing a full-year, two-semester course titled “A Catholic Introduction to Artificial Intelligence” that will be ready for the Fall 2026 and Spring 2027 semesters. This course provides a hands-on and critical introduction to Artificial Intelligence, covering its history, foundational concepts, societal impact, and ethical concerns.

Part One introduces students to the foundations of artificial intelligence, beginning with what AI is and how it developed from early automation to modern machine learning. Students will explore key concepts such as neural networks, deep learning, and large language models, and examine how AI is used across industries such as healthcare, finance, gaming, and the arts. The semester also emphasizes critical thinking about the social and ethical implications of AI, including issues of bias, privacy, surveillance, and the future of work. Students will gain hands-on experience building and working with modern AI tools like NotebookLM, Jupyter Notebook, ChatGPT, Claude, Gemini, and Google Teachable Machine.

In Part Two, students learn how large language models operate in practice by running open-source models locally using tools such as Ollama and interacting with them through interfaces like Open WebUI. They will explore prompt engineering, system prompts, tools, and functions, and experiment with AI-powered agent-based automation. The semester concludes with projects in which students design simple AI assistants and agents, giving them practical experience applying AI responsibly and creatively.

Continuing the Discussion

What are your thoughts on this topic? I invite you to join other homeschooling parents and me in the Homeschool Connections Community or our Facebook group.