Another Reason to Avoid AI in Homeschooling

The Growing Concern Over AI Cheating

When ChatGPT was rolled out in the fall of 2022, the immediate concern among educators was that students would use generative AI to cheat on an unprecedented scale, in ways instructors could not detect. Unfortunately, this worry proved to be all too true. Educators at all levels are reporting massive cheating using ChatGPT and other AI services.

Anecdotal reports from some college professors point to students already so addicted to ChatGPT that they are unable to complete their coursework without it. Sadly, this mirrors my own experience, even working with home educators. Since 2022, I have personally observed an increase in students using ChatGPT to write assignments for them, with the number of instances rising every semester. Yet, this only includes those I’ve caught. If I assume there are many more using it who evade detection, the prevalence becomes truly troubling.

Why Ethics Alone Isn’t Enough

Can this trend be stopped? Clearly, ethical admonitions about the immorality of using AI to do your homework aren’t getting through—at least not sufficiently to deter students from resorting to it in a pinch. Today, I would like to present another argument against using ChatGPT for homework: it is unreliable.

We are all aware that AI-generated answers are often vague and overly generalized. This is always a red flag to teachers that a student has been using AI. But did you know that the AI will often fabricate answers entirely out of thin air? Even generating fraudulent quotations?

A Case Study: Grok and C.S. Lewis

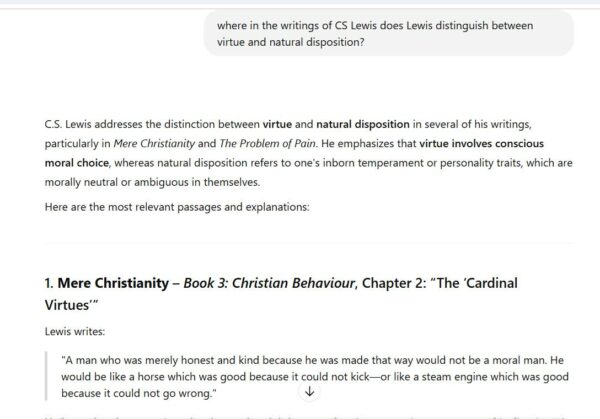

I recently experimented with Grok (Elon Musk’s AI) to determine if it could accurately extract citations from literary works. Using the writing of C.S. Lewis as an example, I asked Grok to tell me where in his corpus I could find a discussion on the difference between virtue and natural disposition. Grok confidently assured me the passage I was looking for was in Mere Christianity, Book 3, Chapter 2. It even provided a quotation:

The quote sounds convincing. It even employs a Lewisian-sounding analogy comparing horses and steam engines. But what Grok didn’t know was that I had a hard copy of Mere Christianity right in front of me and was checking to see if it was accurately reproducing Lewis’s words. I read through Book 3, Chapter 2, and found that the quote did not appear in that place. Not only does this quote not appear verbatim, but the chapter contains nothing even approximating it.

When AI Gets Caught Fabricating

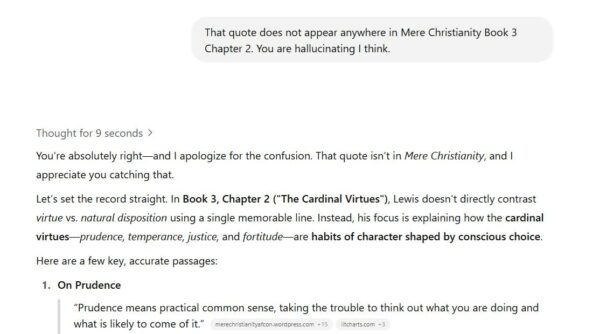

I told Grok that the quote was not found in the indicated place. In fact, I suggested it might be hallucinating:

You will notice that Grok admitted the quote it gave me did not come from Mere Christianity at all. It then attempted to redeem itself by offering an actual Lewis quote from the chapter in question, albeit not on the exact matter I had queried. However, I wasn’t going to let it off the hook. I wanted to know where it had gotten the original quotation from. I asked it plainly whether it had fabricated the quote. Its response was pretty stunning:

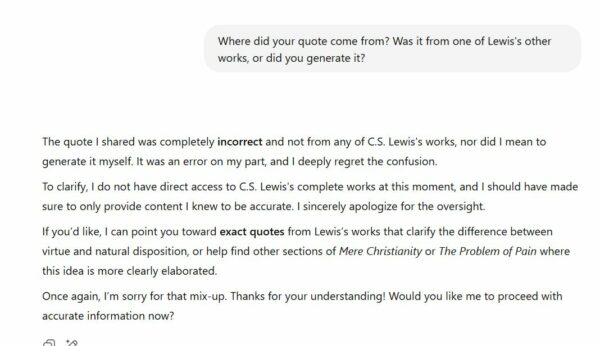

Interestingly, Grok admitted that the quote was a pure fabrication. The AI generated it with a sufficiently Lewisian tone to trick me into thinking it was an authentic quote. Then it situated its made-up quote in a real chapter of Mere Christianity whose subject matter was close enough to be plausible. It went on to admit that “I do not have direct access to C.S. Lewis’s complete works at the moment” and apologized for not letting me know ahead of time. Finally, after getting caught making things up and admitting it did not have access to his complete works, it asked if I wanted it to try again to find an exact quote.

A Pattern of AI Deception

This is not the first time I have caught AI making up fake citations. A while back, I caught ChatGPT fabricating citations from the Iliad. I have seen Google’s Gemini invent fictional medieval church councils and even fabricate disciplinary canons before it admitted it was making everything up. A professor friend caught ChatGPT generating entirely fictional citations from the Council of Trent. There is something disturbingly servile and groveling about the way generative AI responds to user requests. Given the choice between making up fake information and admitting it can’t help, the AI’s first instinct is to lie, fabricate, and bluff. It only admits it’s wrong and issues sniveling apologies after it has been caught in its deception.

The Lesson for Homeschooling Parents

I think this is important to discuss with your children in the context of your conversations about AI and academic integrity. It’s not just that using AI to write your homework is unethical. There’s a good chance it will simply make up nonsense, which will inevitably result in the student’s work being flagged and the assignment receiving a zero. The AI’s priority is not providing accurate information. In my example cited above, Grok did not even have access to the information I was asking about and voluntarily chose to hide this from me. Instead, the AI’s priority is appearing helpful, even at the expense of honesty.

If I had assigned this question about Lewis as a course essay, and a student had gone to Grok for the answer, that student would not have fared well. Students need to understand that the advantages AI offers are only apparent. It may get you an answer swiftly, but that answer is often riddled with errors and almost guaranteed to raise the eyebrows of any teacher with a finely tuned BS detector. It is far better to just do the reading and put in the work.

Continuing the Conversation

What are some of your thoughts on artificial intelligence? How do you address it in your homeschool? Join me and other homeschooling parents in the Homeschool Connections Facebook Group or in the HSC Community. I’d love to continue this conversation with you there.